If one could equate faster typing with velocity, engineering practices perhaps would not matter in the world of software development productivity. Thankfully, there are reasons most organizations do not use words per minute in their evaluation process when hiring new software developers. Slamming out low-quality code and claiming progress, be it in story points or merely in finished tasks on a Gantt chart, is a fast track to creating boat anchors that hold companies back rather than pushing them forward.

Without proper engineering practices, you will not see the benefits of agile software development. It comes down to basic engineering principles. A highly coupled system, be it software, mechanical, or otherwise, provides more vectors over which a change in one part of the system can ripple into other parts. This is desirable to a degree: you need your transmission to couple to the engine in order for the engine to make a car move. But the more coupling you have beyond the minimum needed to make the system work, the less stable the overall system becomes. If the braking system suddenly activated because a Justin Bieber CD was inserted into the car stereo, you would probably see that as a pretty bad defect. And not just because of the horrible “music” coming out of the speakers.

So what are the specific engineering practices? Some are lower-level coding practices; others are higher-level architectural concerns. Most rely on automation in some respect to guard against complacency. While I am generally loath to use the term “best practices”, for fear that someone might take these practices and apply them in a cargo-cult manner, these are some general practices that seem to apply across a broad section of the software development world:

Test Driven Development and SOLID

While detractors remain, it has ceased to be controversial to suggest that the practices that emerged out of the Extreme Programming movement of the late 1990s and early 2000s are helpful. Test-driven development, as a design technique, selects for decoupled classes. It is certainly possible to use TDD to drive yourself to a highly coupled mess, given enough work and abuse of mocking frameworks. However, anyone with any sensitivity to pain will quickly realize that having dozens of dependencies in a “God” class makes you slow, makes you work harder to add new functionality, and generally makes your tests brittle and worthless.

To move away from this pain, you write smaller, testable classes that have fewer dependencies. By reducing dependencies, you reduce coupling. When you reduce coupling, you create more stable systems that are more amenable to change, quite apart from the other benefits you get from good test coverage. Even if you used TDD only for its design benefits and never ran the tests again after writing them, you would still get better, less coupled designs, which lead to greater velocity when you need to make changes. TDD doesn’t just help you in the future. It helps you move faster now.
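As a minimal sketch of what “smaller classes with fewer dependencies” can look like, assuming Python and entirely hypothetical names (`InvoiceCalculator`, `TaxRateSource`, `FixedTaxRates`), here is a class that receives its single dependency from outside. Because the dependency is narrow, the test can hand it a trivial stand-in instead of reaching for a mocking framework:

```python
from dataclasses import dataclass
from typing import Dict, Protocol


class TaxRateSource(Protocol):
    """Hypothetical dependency: anything that can look up a tax rate."""

    def rate_for(self, region: str) -> float: ...


@dataclass
class FixedTaxRates:
    """Trivial in-memory stand-in used by the test below."""

    rates: Dict[str, float]

    def rate_for(self, region: str) -> float:
        return self.rates[region]


class InvoiceCalculator:
    """Small class with exactly one injected dependency."""

    def __init__(self, tax_rates: TaxRateSource) -> None:
        self._tax_rates = tax_rates

    def total(self, net_amount: float, region: str) -> float:
        rate = self._tax_rates.rate_for(region)
        return round(net_amount * (1 + rate), 2)


def test_total_adds_regional_tax() -> None:
    # The test needs one line of setup because the class has one dependency.
    calculator = InvoiceCalculator(FixedTaxRates({"EU": 0.25}))
    assert calculator.total(100.0, "EU") == 125.0


test_total_adds_regional_tax()
```

If a test for a class like this started to need a dozen stand-ins, that would be the pain signal described above, and a hint that the class is doing too much.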

TDD is just one step on the way to keeping your code clean. Robert Martin treats the subject in much more depth in his book, Clean Code. He calls on all of us to be professionals: to keep our standards and not give in to the temptation to write a bunch of code that meets external acceptance criteria at the cost of internal quality. While you can, in theory, slap some code together that meets surface criteria, it is a false economy to assume that bad code today will have a positive effect on velocity beyond the current iteration.

Of course, having good test coverage, particularly good automated integration, functional, performance, and acceptance tests, has the wonderful side effect of giving you a robust means of regression testing your system on a constant basis. While it has been years since I worked on systems that lacked decent coverage, from time to time I consult for companies that want to “move to agile”. Almost invariably, I find a captive IT department proposing that an entire team spend six months introducing six simple features into a system. I see organizations whose QA departments then have to spend another six months on manual regression testing. TDD is a good start, but these other types of automated testing are needed as well to keep the velocity improvements going, both during and after the initial build.

Simple, but not too simple, application architecture (just enough to do the job)

While SOLID and TDD (or BDD and some of the ongoing improvements) are important, it is also important to emphasize simplicity specifically as a virtue. That is not to say that SOLID and TDD can’t lead to simplicity. They certainly can, especially in the hands of an experienced practitioner. But without a conscious effort to keep things simple (that is, to apply the KISS principle: keep it simple, stupid), excess complexity creeps in regardless of development technique.

There are natural reasons for this. One is the wanna-be architect effect. Many organizations have a career path where, to advance, a developer needs to reach the title of architect, a role in which, at least as it is perceived, you get to select design patterns and ESBs without having to get your hands dirty writing code. There are developers who believe that, in order to be seen as an architect, you need to use as many GoF patterns as possible, ideally all in the same project. It is on projects like these that you eventually see the “factory factories” that Joel Spolsky lampooned in his seminal Architecture Astronaut essay. Long story short, don’t let an aspiring architecture astronaut introduce more layers than you need!

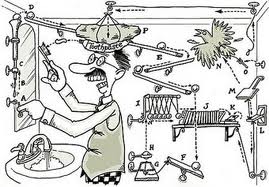

It doesn’t take a wanna-be architecture astronaut to create a Rube Goldbergesque nightmare. Sometimes, unchecked assumptions about non-functional requirements lead a team to create a more complex solution than is actually needed. It could be “Sarbanes-Oxley Auditors Gone Wild” (now there is a blog post of its own!), where an aggressive interpretation of the law is used to require layers you don’t really need. It could be a request for five nines of reliability when you only really need three. These kinds of excesses show up all the time in enterprise software development, especially when they come from non-technical sources.
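To put the nines in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes a 365-day year and treats availability simply as the fraction of the year the system is up; the function name is made up for illustration:

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600; ignores leap years


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Minutes per year a system may be down at the given availability."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


for label, percent in [("three nines", 99.9), ("five nines", 99.999)]:
    minutes = allowed_downtime_minutes(percent)
    print(f"{label} ({percent}%): {minutes:.1f} minutes/year")
```

Three nines allows roughly 8.8 hours of downtime a year; five nines allows a little over five minutes. Closing that gap is typically what justifies extra layers of redundancy, so it is worth confirming that someone actually needs it.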

The point is this: enterprise software frequently introduces non-functional requirements in something of a cargo-cult manner, “just to be safe”, and as a result can multiply the cost of software delivery, sometimes by as much as a factor of 10. If a layer is being introduced as the result of a non-functional requirement, consider challenging it to make sure it really is a requirement. Sometimes it will be, but you would be surprised how often it isn’t.

Automated Builds, Continuous Integration

If creating a developer setup requires 300 pages of documentation, manual steps, and other wizardry to get right, you are likely to move much slower. Even if you have unit tests, automated regression tests, and other practices, lacking an automated way to build the app frequently results in “Works on My Machine” syndrome. Once you have a lot of setup variation, which is what you get when setup is manual, defect resolution goes from a straightforward process to something like this:

- Defect logged by QA

- Developer has to manually re-create defect, spends 2 hours trying, unable to do so

- Developer closes defect as “unable to reproduce”

- QA calls developer over, reproduces

- Argument ensues about QA not being able to set up the environment correctly

- Developer complains “works on my machine”

- 2 hour meeting to resolve dispute

- Developer ends up having to diagnose the configuration issue

- Developer realizes that DEVWKSTATION42 is not equivalent to LOCALHOST for everyone in the company

- Developer walks away in shame, one day later

Having builds that are automated, regular, and continuously integrated can help avoid wasting a day or five every time a defect is logged. It should not be controversial to say that this practice increases velocity.
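The DEVWKSTATION42 problem in the list above usually comes down to a machine-specific value baked into code or a hand-edited config file. One common remedy, sketched here in Python with a hypothetical variable name (`APP_DB_HOST`) and helper, is to read such values from the environment so that the automated build and every workstation go through the same code path:

```python
import os
from typing import Mapping, Optional

# Hypothetical variable name and default; not taken from any real project.
DB_HOST_ENV_VAR = "APP_DB_HOST"
DEFAULT_DB_HOST = "localhost"


def database_host(environ: Optional[Mapping[str, str]] = None) -> str:
    """Return the database host from the environment, or the default."""
    env = os.environ if environ is None else environ
    return env.get(DB_HOST_ENV_VAR, DEFAULT_DB_HOST)


if __name__ == "__main__":
    print(f"Connecting to database on {database_host()}")
```

The optional `environ` parameter is there only so the function can be exercised in a test without touching the real environment. A developer whose database really does live on DEVWKSTATION42 sets the variable on that machine; nobody else inherits the assumption.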

Design of Software Matters

Design isn’t dead. Good software design can help lead to good velocity. Getting the balance wrong, whether too simple or too complex, cripples velocity. Technical practices matter. Future articles in this velocity series will focus on some of the more people-related ways to increase velocity, and they are certainly important. But with backward engineering practices, none of the things you do on the people front will really work.